Cost optimization is key to creating a well-architected and efficient AWS environment. In fact, it is one of the pillars of the AWS Well-Architected Framework. Let’s talk about the AWS Instance Scheduler solution, which manages EC2 and RDS instances.

AWS provides many options for cost optimization, e.g. using Spot Instances to save up to 90% or using Savings Plans to get discounts of up to 72% on compute spending.

However, to really take advantage of AWS’s pay-as-you-go pricing model, architects need to understand the average utilization on their AWS accounts and create solutions that turn off unused resources automatically and consistently to avoid waste.

The most common resource creation pattern across enterprises large and small consists of multiple environments that are decoupled across many AWS accounts. This decoupling through AWS accounts is a best practice for AWS clients, but it adds a layer of complexity to FinOps cost monitoring and cost optimization.

Most organizations will also have one or more development environments where developers deploy new features, test code, and see how new deployments interact with existing features.

There are also test environments (where new features are tested by QA and product testers) as well as a staging environment, which mimics production and is used to try out new deployments so that releases cause as little production downtime as possible and the customer experience stays seamless.

However, apart from production, none of these environments is usually needed after office hours, and especially not on the weekend when there is no new development or testing. Therefore, architects could create a solution that powers off these resources after business hours and over the weekend.

Let us take out our calculators. Suppose we have one EC2 instance running in each environment. That means we have 4 instances (1 each for dev, QA, stage, and production) that run 168 hours a week each. A c5.xlarge (a commonly used EC2 instance type for compute loads) costs around $0.17 per hour. So the normal cost to run the above would be about $114 per week.

Now suppose an architect creates a scheduled automation script that turns off the instances in the non-production environments every day at 6 PM and over the weekend, and turns them on each working day at 9 AM. That reduces the running hours of each non-production instance to 45 per week (9 hours each working day, with resources shut down over the weekend), while production keeps running all 168 hours. The total drops from 672 to 303 instance-hours, so the cost of running the whole account falls to about $51.51 per week. This is an overall saving of roughly 55%.
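The arithmetic for this scenario can be checked with a few lines of Python. This is only a sketch of the example in the text: it assumes the approximate $0.17 hourly rate quoted above, one production instance running around the clock, and three non-production instances running 9 hours a day, Monday to Friday. The function and variable names are illustrative.

```python
HOURLY_RATE = 0.17             # approximate c5.xlarge price per hour, as quoted in the text
HOURS_PER_WEEK = 24 * 7        # 168 hours for an always-on instance
OFFICE_HOURS_PER_WEEK = 9 * 5  # 9 AM to 6 PM, Monday to Friday


def weekly_cost(always_on: int, scheduled: int) -> float:
    """Weekly cost of instances running 24/7 plus instances running office hours only."""
    instance_hours = always_on * HOURS_PER_WEEK + scheduled * OFFICE_HOURS_PER_WEEK
    return instance_hours * HOURLY_RATE


# Baseline: dev, QA, stage, and production all run around the clock.
baseline = weekly_cost(always_on=4, scheduled=0)
# Scheduled: only production runs around the clock.
optimized = weekly_cost(always_on=1, scheduled=3)
savings_pct = (baseline - optimized) / baseline * 100

print(f"baseline:  ${baseline:.2f}")    # baseline:  $114.24
print(f"optimized: ${optimized:.2f}")   # optimized: $51.51
print(f"savings:   {savings_pct:.1f}%") # savings:   54.9%
```

Changing the instance counts or the hourly rate shows how the saving scales with the share of non-production capacity in the account.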

AWS provides the AWS Instance Scheduler, a CloudFormation template that creates all the resources you need to automate stopping and starting your instances.

The Instance Scheduler is an AWS solution available for free on the AWS Solutions page. From there you can read the documentation and launch the stack directly, or, if you are interested in how everything is set up, download the CloudFormation template and log in to your AWS account.

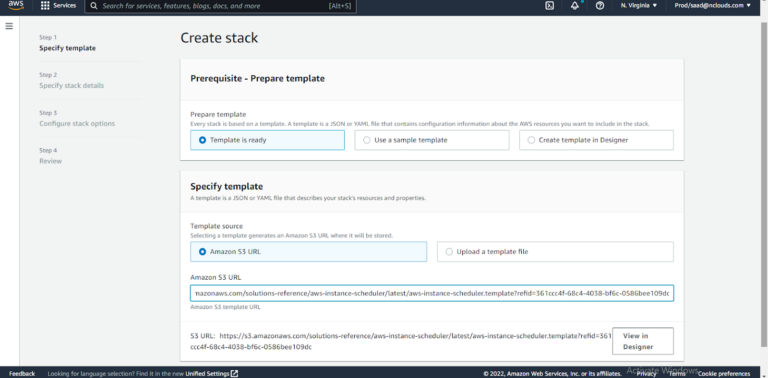

Navigate to the AWS CloudFormation page and either upload the template from the location where you saved it or provide the link above in the Amazon S3 URL field, as shown below.

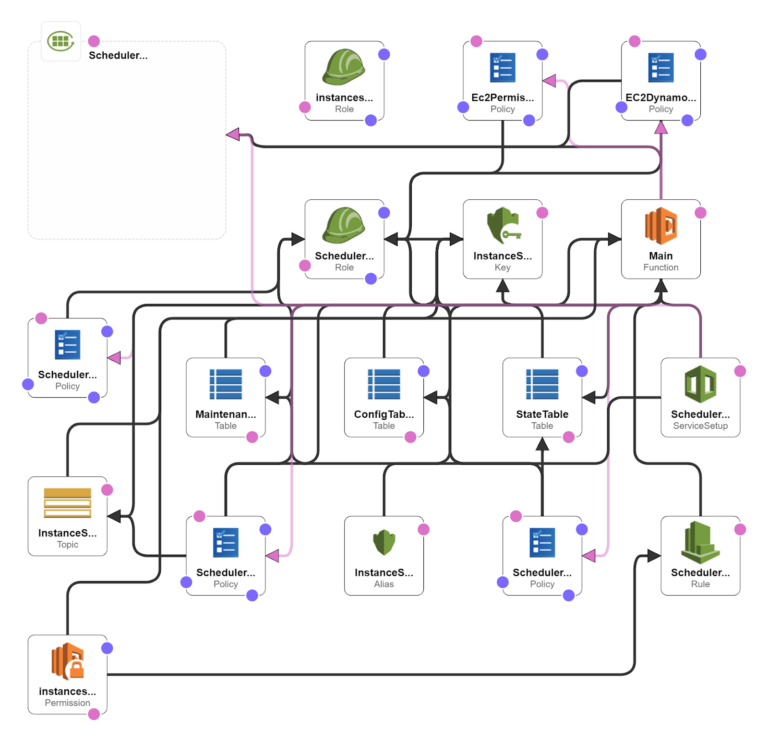

If you want to see all the resources and the infrastructure diagram of the solution, you can click “View in Designer”.

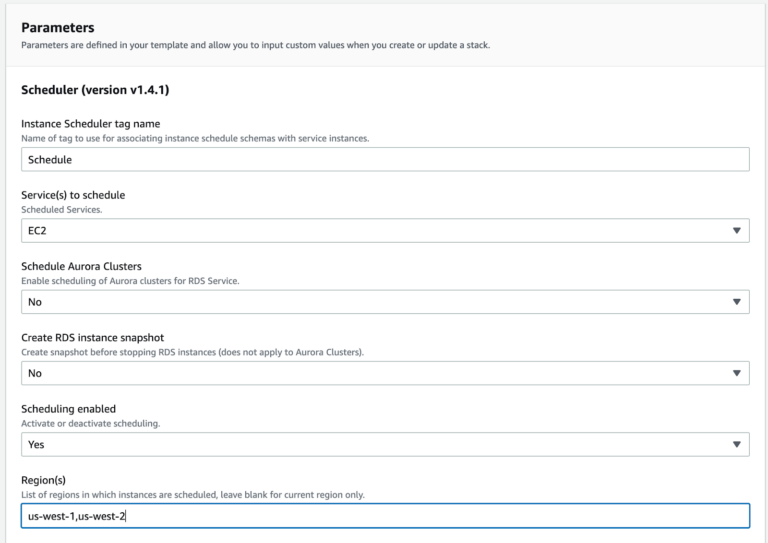

Click Launch stack and give it a name and define the parameters.

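The scheduler tag parameter sets the tag key that the solution uses to identify which instances need to be shut down and started (the default is “Schedule”). The tag value on each instance names the schedule that applies to it. So you could set the key to “Environment”, tag infrastructure with key-value pairs like “Environment: Non-prod”, and any non-production instance carrying that tag will be picked up and follow the schedule named “Non-prod”.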
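Tag-based selection of this kind can be illustrated with a small, self-contained sketch. It is not the solution's actual code: the instance records are plain dictionaries loosely modeled on the shape of EC2 instance descriptions, and the tag key, instance IDs, and function name are hypothetical.

```python
SCHEDULER_TAG_KEY = "Environment"  # hypothetical tag key chosen in the stack parameters


def instances_in_scope(instances: list[dict], tag_key: str) -> dict[str, str]:
    """Return {instance_id: tag_value} for every instance that carries tag_key."""
    selected = {}
    for instance in instances:
        for tag in instance.get("Tags", []):
            if tag["Key"] == tag_key:
                selected[instance["InstanceId"]] = tag["Value"]
                break  # tag keys are unique per instance, so stop at the first match
    return selected


# Made-up inventory: two tagged non-production instances and one untagged instance.
inventory = [
    {"InstanceId": "i-dev", "Tags": [{"Key": "Environment", "Value": "Non-prod"}]},
    {"InstanceId": "i-qa", "Tags": [{"Key": "Name", "Value": "qa"},
                                    {"Key": "Environment", "Value": "Non-prod"}]},
    {"InstanceId": "i-prod", "Tags": [{"Key": "Name", "Value": "prod"}]},
]

print(instances_in_scope(inventory, SCHEDULER_TAG_KEY))
# {'i-dev': 'Non-prod', 'i-qa': 'Non-prod'}
```

The untagged production instance is never touched, which is the practical benefit of opting instances in by tag rather than excluding them by name.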

The services parameter allows you to define EC2, RDS, or both to be a part of the resource scan.

There are several other options that increase the flexibility of the solution, for example targeting only particular regions or running it across accounts, since strong AWS implementations have separate accounts for separate environments and purposes.

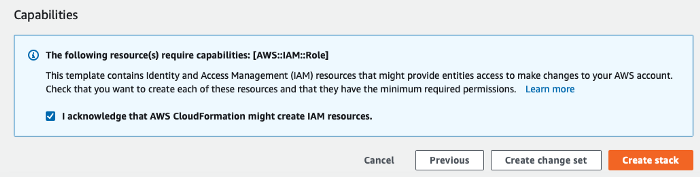

CloudFormation will ask for an acknowledgment that the stack may create new IAM resources. Since the template and solution come directly from AWS, we can provide that permission.

Go ahead and create the stack. After about 4-5 minutes, the stack should have created all required resources. You can check the stack outputs to see the different resources.

All the working parts are now present in your AWS account. The next step is to create the schedules, which the solution stores in a DynamoDB table. You could edit the table directly, but the preferred way is the Scheduler CLI.

To do that, go to this link and download the Scheduler CLI. After you unzip it, install the utility with the setup.py script.

python setup.py install

Once the installation is complete, we can start to create the schedule.

The process works using two components: a schedule and a period. A period is the particular time window that acts as a trigger, defined by days of the week plus a begin and end time. A schedule is what the Lambda function evaluates on each scan, and each schedule can contain multiple periods.

So let’s say we want to recreate our cost scenario from above. Here is how that would look:

scheduler-cli create-period --stack <stackname> --name office-hours --weekdays mon-fri --begintime 09:00 --endtime 18:00

scheduler-cli create-schedule --stack <stackname> --name mon-fri-9am-6pm --periods office-hours --timezone America/New_York
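To make the relationship between schedules and periods concrete, here is a minimal model of the 9 AM to 6 PM weekday scenario. It is a sketch under stated assumptions, not the solution's implementation: the `Period` and `Schedule` classes are hypothetical, the end time is treated as exclusive, and the input is assumed to be a datetime already expressed in the schedule's time zone.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class Period:
    """Hypothetical model of a period: days of the week plus a time window."""
    weekdays: frozenset  # Monday=0 ... Sunday=6, as in datetime.weekday()
    begin: time
    end: time

    def contains(self, local: datetime) -> bool:
        # Assumption: begin is inclusive and end is exclusive.
        return local.weekday() in self.weekdays and self.begin <= local.time() < self.end


@dataclass(frozen=True)
class Schedule:
    """Hypothetical model of a schedule: a named group of periods."""
    name: str
    periods: tuple

    def desired_state(self, local: datetime) -> str:
        # An instance should run if any period of its schedule covers the given moment.
        return "running" if any(p.contains(local) for p in self.periods) else "stopped"


office_hours = Period(weekdays=frozenset(range(5)), begin=time(9, 0), end=time(18, 0))
schedule = Schedule(name="mon-fri-9am-6pm", periods=(office_hours,))

print(schedule.desired_state(datetime(2024, 1, 8, 10, 0)))   # Monday morning: running
print(schedule.desired_state(datetime(2024, 1, 8, 20, 0)))   # Monday evening: stopped
print(schedule.desired_state(datetime(2024, 1, 13, 10, 0)))  # Saturday: stopped
```

Modeling a schedule as "running if any period matches" is also why several periods can be attached to one schedule without conflict.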

You can add multiple periods to a schedule if you have several sets of start/stop times.

Since the solution has working components of its own, there is a cost associated with running it. You can find the complete breakdown of the cost here. However, given the savings you can generate, this solution will pay for itself, and it won’t add operational burden to your teams because it is fully automated once configured.

Using the AWS Instance Scheduler allows engineers and architects to take advantage of AWS’s pay-as-you-go model. It is a quick-to-configure solution, built with AWS best practices, that automatically shuts down resources when they are not needed, which results in increased savings and better cost optimization.